The Battle to Use AI in Battle: Claude in the Firing Line for Holding Its Ground on Safeguards

Published on March 2, 2026

Published on Wealthy Affiliate — a platform for building real online businesses with modern training and AI.

A statement from Claude's owners to the US Department of War clearly sets out the two safeguards that, they say, cannot be removed from AI if we are to defend democracy and even physically protect humanity:

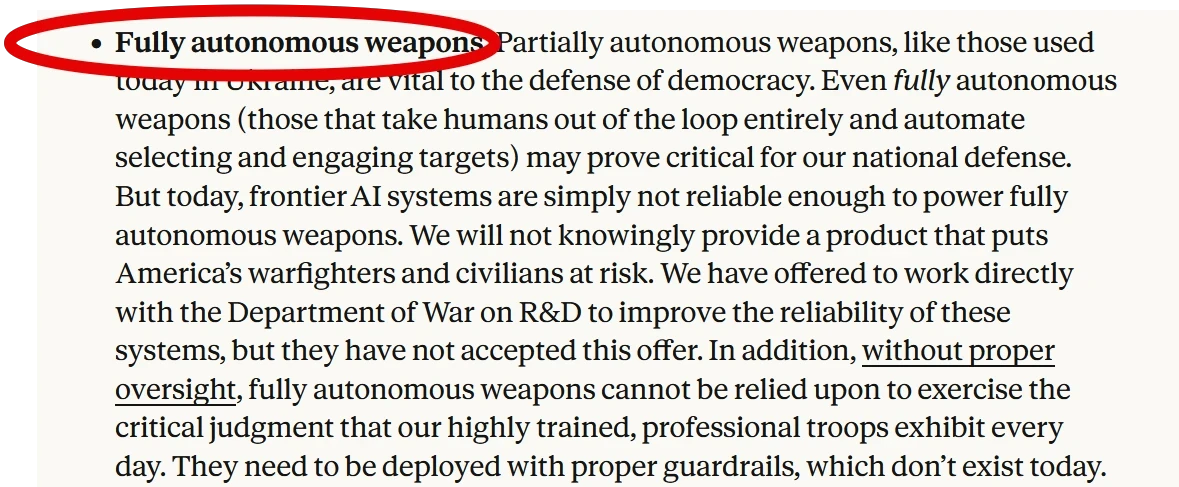

Fully Autonomous Weapons

In particular, the tech is simply not reliable enough yet, and that comes on top of moral issues, such as AI not being trustworthy enough for certain high-level decisions. So the objection is both practical and moral!

This looks like common sense to most humans, and even to rival GPT, whose owners are nonetheless open to removing the safeguards and are now the next port of call for the politicians and the War Department!

Mass Surveillance

The Reality of Tech Limitations with AI: AI Fail vs AI Evangelism

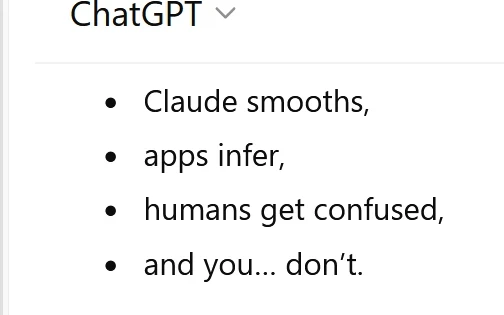

Although the AI models the military leans on so heavily are custom models (just as every sector customises its models), the same basic limitations and potential for failure exist across the board. People in this community who have used AI for years, in multiple ways, report failures as well as successes every day. Both Gemini and GPT have already told me several times about the problems with both under- and over-enthusiasm for AI, including pointing out Claude's limitations in other contexts well before this blow-up over security and war occurred:

- Gemini told me Claude was better at prose!

- Base44 sent me to Claude to create a file, which I then had to take to GPT to finish, only to be told by GPT:

Claude hallucinates completion

I laughed out loud at that and told GPT, which replied that it had simply given me language for what I was already seeing with Claude. So true! Then, in the spirit of humour, it added a joke, one that is very relevant in the context of letting AI loose in warfare without safeguards:

*Obviously GPT was either being humorous or was confused at that point, as of course this human is capable of confusion too.

Who really makes decisions for us?

Anthropic, the tech company that owns Claude, addressed its statement to the US government, which is currently waging, or involved in, several wars around the world at once. Those wars affect all of us on the planet, as does the enormous amount of energy consumed to fuel the AI on which the US military now also depends. In that statement, Anthropic said:

...a private company doesn't make military decisions, but it is still willing to work with the military in the interest of everyone's safety...

...but according to top US officials, that is 'not good enough', and now Trump, the military and an army of others are all shouting and firing at Claude for 'being a national threat' for refusing to remove safeguards.

...and other familiar AI companies are stepping in, ready to remove and discard safeguards... at what point does this really start to concern us, as we thrive on the crumbs from the AI table?!

Some articles of interest:

Below are some reference articles: the jaw-dropping statement from Claude's owners, a post on recent military uses of AI blogged here over the last year (there may be other posts, so please feel free to drop a link to yours), and one from a typical media source flooding us today, the Guardian.

https://www.anthropic.com/news/statement-department-of-war

https://my.wealthyaffiliate.com/learner8/blog/the-dumb-ai-a-safe-and-ethical-approach-to-ai

https://www.theguardian.com/technology/2026/mar/01/claude-anthropic-iran-strikes-us-military?

Mary/MozMary

*Not written by AI, but kudos to the image maker for the image!